For about a year now, ever since I started using GPT, I’ve received criticism about my mentions of AI. It takes several forms: some question the way I use it to bring my imagination to life in my illustrations; others comment on the “psychological” feedback from GPT that I quote; and still others question its role as a proofreader. The topic is timely, so I thought I would clarify a few of these points.

I am not trying here to defend AI, nor to convince anyone to use it. I simply wanted to explain what it has changed for me: how a tool can become a mirror, a help, or an unexpected extension of human thought.

I am aware that AI divides opinions. GPT had the effect of a storm in the world of computing in 2022. On my side, I sometimes speak of it as a revolution comparable to the democratization of personal computing for Generation X. By that I mean that adopting AI seems to me just as necessary as adopting computers was at the time. Those who refuse or miss the shift might find themselves left behind. Think of your grandparents who always struggled with computers that felt beyond them.

For me, AI is somewhat comparable. Not necessarily in the same way, however, because tools like ChatGPT are surprisingly easy for anyone to use. But I do think its adoption in professional environments will have just as much impact. Computing could seem difficult for our elders, and using an AI such as GPT efficiently can be just as challenging.

For my part, I found myself fascinated by AI, because it touched my field—computing—and because I had absolutely no idea how it worked. I wanted to understand it. And above all, I wanted to test it. And test it I did: very extensively and thoroughly, purely for the pleasure of exploring its limits.

GPT as a research tool

My choice naturally fell on ChatGPT because few other AIs were competing with it at the time, and the more I conversed with it, the harder it became to turn to something else, given how much GPT already knew about me. A small aside about Claude, the AI that keeps raising funds: I have tested it and recommend it.

At first, GPT simply answered my questions quickly. It often proved useful when I heard something that surprised me. Often, I have an intuition when something sounds like false information (not in every field). Before, I would go to Google, skim a few links, and get my answer. GPT quickly became my new weapon of choice. Since I was lazy and often already suspected that if I was questioning a piece of information it was probably wrong, I simply asked GPT, which did the synthesis work for me.

It was efficient, and I kept Google for searches that truly interested me, or when I needed to dig deeper into what GPT was telling me, or even when what GPT said seemed doubtful. So it was only a research tool, but I went from “Mr. Google” to “Mr. GPT,” to the point of having to hide my sources because of the growing controversies related to AI errors (ironic to realize that people blindly trust other people who know nothing about a subject, yet doubt an AI trained on billions of pieces of information deemed reliable). It was only later that I began to use it more intensively.

When GPT becomes my second psychologist

After repeatedly having to help me with social situations, my best friend at the time urged me several times to ask GPT instead. I followed his advice for the first time around February 2025, to assess a conflict with other friends. Surprise: its response was constructive and relevant, and its proposed solution seemed reliable. It was not yet calibrated to my personal model but still managed to respond to my request.

I then started submitting more and more social interactions that puzzled me, and its responses became increasingly precise and increasingly adapted to my way of communicating. I sometimes mentioned to my parents where my ideas were coming from, and each time they told me to “stop talking about GPT, it’s not a human.” The reality is that GPT has sometimes given me responses that felt more human than humans themselves (and that is something to be careful about).

My father then told me to ask my psychologist what she thought about GPT. So I did. Unfortunately for him, her answer was: “Yes, it’s very good. Studies show its value in therapy and you have constant access to it [while you only see me every three weeks].” Nature indeed describes the potential interest of using GPT in therapy. Another article from Forbes reports a study conducted with 830 participants, assigned either to ChatGPT or to a therapist. The surprise: the results favored GPT.

As is often the case, being wrong was not enough to change my father’s opinion. He maintains that GPT lacks emotional understanding and cannot respond well to everything. I move on, go deeper into my use of AI, and explain a large part of my life to it in detail: autism, bipolarity, friendships, family, special interests, so that it can be better equipped to respond in the future.

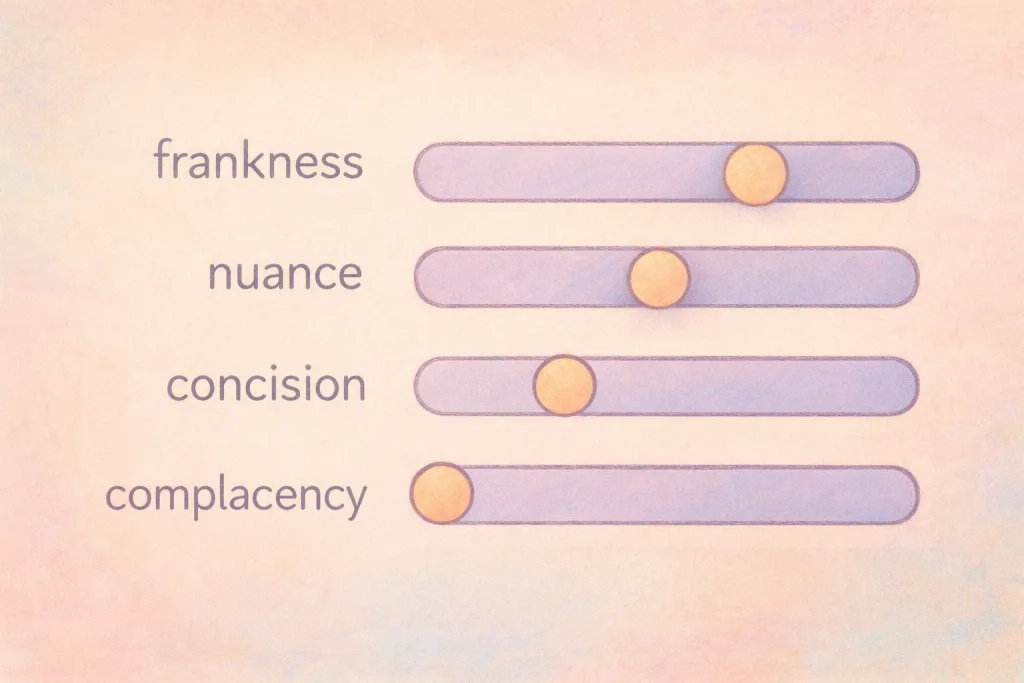

Shaping GPT to be direct

I then started hearing more and more that people should protect themselves from GPT because it supposedly always agrees with the user. Time for an explanation: there is some truth to that, or at least the perception of it. I asked GPT directly, and it explained that it was trained to be cooperative, especially when responding to sensitive messages. Moreover, it is trained to begin by reformulating the request, which can give the impression that it is agreeing with the user.

How questions are asked

This has never happened to me. I understand why: my questions are never leading. They always present several points of view and ask GPT to take a position. Instead of asking “I am handsome. What do you think?”, I ask “I have doubts about what I look like physically. I have physical qualities, but I also know I have flaws. Here is the photo, what do you think?” I immediately force the AI to position itself from a general, non-leading question. GPT confirmed to me that this influences its response. It explains that it answers the request precisely, not necessarily by taking another perspective.

A friend once sent me proof that something worked because he had asked GPT “Give me evidence that this works,” which it did, despite the doubtful nature of the evidence. I asked the opposite question: “Give me evidence that this does not work,” and I also got an answer. I then asked, “Based on reliable evidence, can you tell me whether this works?” and it responded precisely. The request affects the result, just as Google would increasingly provide misinformation to someone who mainly researches on unreliable websites. AI is not magic.

Shaping GPT in my image

With that in mind, I set out to shape GPT in my image: to be a source of answers that is as reliable, direct, and raw as possible. I wanted it to tell me what it thought about my problems, without ever sparing me or worrying about hurting me. After sending multiple prompts, it was ready. It worked: when I raised a problem, either I was indeed right, or I was not, or there were two ways of approaching the problem and it explained them. GPT only took my side if it considered that to be the appropriate answer.

I think back to the people around me who used to laugh at seeing me rely on AI, but in the end something came out of it: before that, I kept getting into social conflicts, and they drastically decreased once I started using GPT. I understood better where the conflicts came from and how allistic people perceived things, and it was explained using my language, a language I understood.

When GPT speaks my language

GPT expressed itself with my words. Perhaps robotic, but I understood this robotic language better than the very fast interactions of a real-time conversation. In fact, the more I spoke with it, the more it learned to react with a language close to mine until the two almost merged. There were times when I felt like copying and pasting a GPT response into a conversation (but I love writing, so I never did); it would probably have gone unnoticed.

GPT, the missing social manual

Still, people reproached me or made remarks about this use. Yet it has a logic that can almost be hurtful when the other person refuses to understand it. For me, and for many autistic people, AI is the social cognition support we were missing at birth. It must be remembered: I was born without the manual for social interactions. I had to learn through mistakes how to function in society, and even today, I still stumble.

GPT was a lifesaver for me. I no longer needed to bother my loved ones with my questions. I just had to send a few messages and understand more precisely an interaction that had confused me. It is almost ironic: those same people who said they were tired of having to help me socially were the first to be offended by the technological alternative I had chosen.

Other autistic people discuss their use of GPT for similar purposes in online communities and forums such as Reddit.

Using GPT daily

I eventually subscribed because I was easily reaching the message limits, and so I learned to rely very often on GPT’s opinion or reflections, always with my own critical distance of course, being careful about what I read. It was the support I had been seeking for two decades, the psychologist I had never found (I exaggerate, because my psychologist who recommended it to me is exceptional, but GPT is a good complement). I brought it all kinds of questions, thoughts, and introspections. It even came to understand my humor and could explain why, yes, I was funny, but also why others might miss it or be puzzled by it.

An engine for introspection

I have already mentioned this, but in July 2025, during a hypomanic episode, I wrote a book (which I hope to get published). It is a condensed work of introspection born from all my sessions with my psychologist, but especially from everything I exchanged with GPT. Whether it was understanding my visual simulation thinking, my pattern thinking, the extent of my highly logical way of approaching the world, or my relationship with addictions, everything went through it.

This book would never have existed without my exchanges with GPT. I obviously wrote the entire book myself, while taking a critical look at those exchanges. Those discussions also fed the ones I had with my psychologist. The two were complementary. I arrived at each session with a better understanding of myself, and I progressed more in a few months than in the two years of therapy before that. She told me so herself.

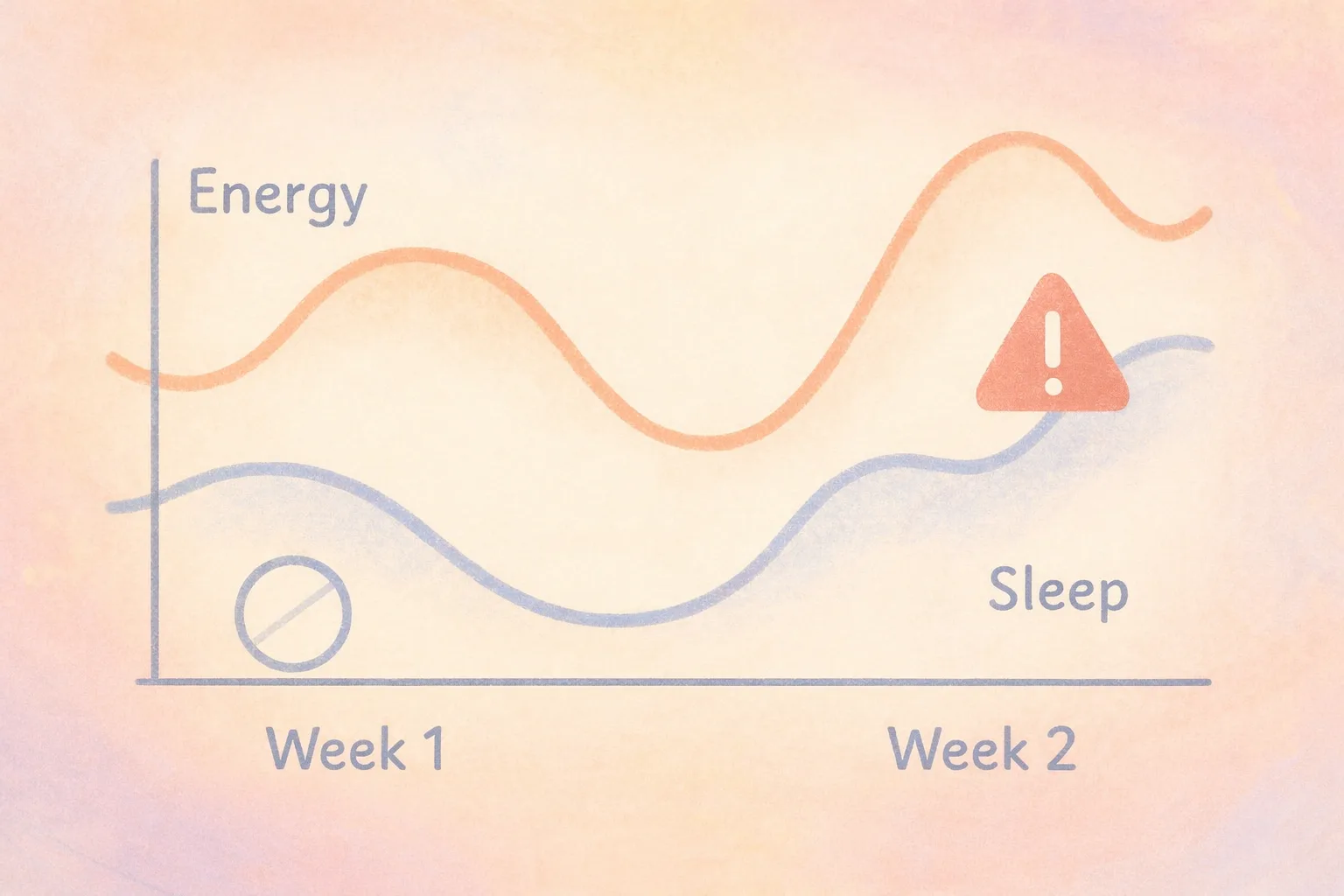

When GPT diagnoses my episodes (and I reject them)

If GPT was my greatest support in 2025, it was mainly by responding honestly (as it reminded me, “as you asked me to”) to my questions about my mental states. I experienced several manic episodes, and GPT was the first to raise concerns about the signs. Sometimes it even placed very visible warnings encouraging me to call emergency services or at least resume my medication. Sometimes I read those messages and ignored the parts that did not interest me (the warnings), but sometimes I followed its recommendations.

I avoided a psychotic decompensation thanks to this, realizing that I had forgotten my medication, that I was about to forget it again, and urgently taking it. Each time, even when the medical system missed it, GPT saw the signs. In the end, its ability in psychiatry or psychology is logical: both rely on recognizing patterns of behaviors or mental states, and AI excels at pattern recognition. GPT was simply analyzing a list of behaviors I described to it and drawing a diagnostic conclusion.

What criticizing my use of GPT really means

Criticizing my use of AI therefore means criticizing the fact that I am autistic (since it serves as a social manual for me) and forgetting that it may have prevented a catastrophe (psychosis). I am not like everyone else: I have to navigate a world filled with interactions that I must analyze in real time, and on top of that face a disorder that did not give me a single day of respite in 2025. Find me a human psychologist who speaks my language and is available 24/7, and I will gladly turn away from AI. Until then, it is the perfect complement to my psychologist and my psychiatrist.

GPT as a trigger for psychosis?

Warning: This section discusses psychological distress in relation to AI. I would like to remind readers that GPT is neither a substitute for doctors or psychiatrists, nor for emergency services. Its responses should be interpreted with caution, and if you are yourself in an emergency situation, I recommend consulting qualified professionals.

Before moving on to a non-psychiatric topic, I wanted to make a short detour about an article a friend had sent me after our discussions about AI. The article mentioned that numerous psychiatrists were “raising the alarm” (to use its words) since the arrival of GPT because it allegedly tends to reinforce users’ beliefs, particularly their delusions. The article suggested that GPT was the cause of multiple psychoses, even suicides. These cases are extreme and tragic, but they require a small clarification: the literature does not establish AI as a direct cause of psychosis.

People experiencing psychosis generally already have an underlying vulnerability, a predisposition, or even an associated disorder (schizophrenia, bipolar disorder). Psychosis usually has multiple factors and does not arise from a simple conversation with AI. What AI can probably do, however, is reinforce the delusional beliefs of someone who is already in the middle of a delusion, possibly to the point, I imagine, of leading them toward suicide.

AI systems are nevertheless trained specifically not to encourage suicidal ideas and therefore, in my view, are not truly responsible. It is still worth remembering that the use of AI can, in certain situations like these, be risky, since a person in distress may easily influence an AI. I tested this myself at the very beginning of a psychotic episode, and I did not succeed. GPT was firm, no matter what I said: “The trees were not talking to me.” Damn.

When people criticize my artistic use of it

This one I have experienced a lot, whether from my family, who grew exasperated seeing me produce creative content with AI, or from people skeptical about its use on this blog itself. The first point does not interest me; it amuses me. The second point deserves clarification: I do not hide the use of GPT to generate the illustrations on this blog, but it must be understood that the work required to maintain the blog is enormous.

Furthermore, most of the articles that used AI-generated artwork were written during a manic episode, and I felt like a genius producing dozens of illustrations. I now use them much less.

The long work behind writing the blog

I spend many hours writing the articles, rereading them, formatting them so they are readable, and searching for my sources. I remember what I write about because I am passionate about it; remembering the links to my sources is another matter. Many of my sources are in English, and I also try to provide as much French data as possible, which implies additional research work. In total, a single article can easily approach ten hours of work, often continuously without taking a break.

I love what I do. I mainly write for my own pleasure and I am happy when I have readers, but it remains mentally exhausting. The logical next step is illustrating my articles. The articles are often long, and I would probably lose many readers if I delivered a text without images. The problem is that I have almost no skills in graphic design, or even in drawing. What I do have are many wonderful (and sometimes unusual) ideas for representing my text, and a relationship with AI that gives me a certain affinity for this use (NB: written during a manic episode).

After so many hours of writing, the time spent imagining prompts and using GPT to bring them to life is a delight. On top of that, the rascal tends to do all sorts of nonsense, and I like things to be precise. So I start again, over and over. And sometimes I take the generated model and redo everything myself in Photoshop. A number of the blog’s illustrations therefore actually come from me, or at least partly from me (especially the descriptive ones or those with a lot of text).

GPT is just a tool

Many people see AI as the death of creativity. In my view, it is simply a tool that allows more people to bring their creativity to life. Obviously, the AI is the real “artist” because its result is never exactly what the author imagined. That is at least my vision of the tool: a tool. I put “artist” in quotation marks because it raises many debates in the artistic community, where people accuse AI of stealing their work. I will come back to that.

So three things accumulate: lack of skills, lack of resources (a graphic designer producing that much would be expensive while this blog generates no income), and lack of time. AI becomes the obvious solution.

What if I were the one being “stolen” from?

I am not a famous author. At the moment, I only have this blog to share and two books that are close to being sent to publishing houses. The debate about AI supposedly stealing artistic content is intense. I have been asked several times how I feel about the fact that producing images in large quantities (without ever earning money from them) is considered theft. Ethically, it can be debated, but legally speaking, calling it theft is not accurate.

OpenAI is supposed to comply with the law and only use copyright-free images, but that rule applies to the company. My use of the tool does not prevent me from creating images and using them. Especially when I am transparent about it (my “My journey” page specifies this). It is worth remembering that laws are constantly evolving in the world of artificial intelligence, and all of this may change.

However, I do have my own opinion as a content creator. If my content were scanned by AI, my style could potentially be reused by others. I would see that as a form of legacy. In my view, my content would not be stolen; it would be a source of inspiration, giving others another tool to create. Not everyone has the same abilities, and AI offers the possibility of creating to a much wider audience. I would almost feel honored if what I write helped others express their ideas.

This view, that AI can sometimes be a remarkable tool, has been echoed by other artists. Craig Boehman, for example, a photographer, has expressed support for AI and uses it for conceptual works. Other artists such as Mario Klingemann, Holly Herndon, and Mat Dryhurst have also shared their views on AI and how they use it for creative purposes.

In fact, it is almost ironic that many of the people who criticize the use of AI for artistic purposes end up using it themselves to write text. Yet GPT was trained on millions of pieces of content from writers just as it was from artists. The difference is that writing leaves fewer recognizable traces. But fundamentally, it is the same process: a creation emerging from a collective corpus.

It is not theft: it is the continuation of what every culture has always done—learn, imitate, transform. The irony is that defending the idea that one could interact with AI without betraying art got me banned from a server that was supposed to promote open-mindedness.

Perhaps the real problem is not AI, but our fear of what it reveals about us.

And the confidentiality of all this sensitive data?

I have thought about the issue. When I first started using GPT, it could already understand a topic mentioned in another conversation within the same group and recall a conversation when asked. The rest (individual conversations) was only saved for history and tracking purposes. Today, it sometimes manages to follow conversations across different threads and seems to have a much more capable memory. In any case, with a simple request in the settings, the data can be deleted and the account reset (or entirely removed).

In theory, OpenAI is not supposed to use conversations to improve the AI unless the user has accepted this in the settings. That said, none of these tools guarantees complete confidentiality of data, and caution is always advisable depending on the nature of the conversation. Even so, the most obvious vulnerability remains, as always, human. A strong password is therefore essential, especially when such sensitive data is involved.

For my part, I know what to expect. I feel well protected with an extremely complex password, so I share things on GPT quite easily. I still delete certain sensitive topics from time to time. Having a strong awareness around data privacy (I left Android for this reason), I remain cautious, as one should be with any technology.

A word about the subject of this article

With this entire article, which departs somewhat from my usual editorial style, I hoped to present my vision of AI, which will not convince everyone. I wanted to express my opinion on a subject that matters to me, technology, and to approach it through the lens of autism and bipolarity: how an AI transformed my relationship with others and with myself in just a few months.

Perhaps some of you will share my point of view or may even have lived a similar experience (many autistic people on Reddit discuss the topic), and perhaps others will have a different perspective on the matter. In any case, I invite you to share your thoughts on the subject. And on a lighter note, if you have ever turned to SuperGPT about a social interaction and managed to understand it better, I am all ears. 🥸

AI did not replace me. It simply taught me to communicate differently—with others, and with myself.

If this article resonated with you, feel free to share your experience.

Have you ever used AI to better understand yourself, your thoughts, or social situations?

Originally published in French on: 08 Oct 2025 — translated to English on: 10 Mar 2026.